The AI Productivity-Quality Paradox: Why Are We Building Faster But Worse?

The Tony Stark Principle and Architecture-First Development Framework

Download this framework (whitepaper) as a PDF: [Download PDF]

The software development world is experiencing a productivity surge. AI coding assistants promise to accelerate development, democratize programming, and solve the perennial challenge of software delivery speed. Yet beneath the velocity metrics and lines-of-code celebrations, a more troubling pattern is emerging: code quality is declining, architectural debt is compounding, and the conditions that previously built architectural intuition are disappearing.

The problem is not that AI coding tools don't work. The problem is that they work too well - amplifying whatever architectural understanding developers bring to them, whether strong, weak, or absent entirely.

Multiple recent studies document this AI coding paradox: productivity metrics rise while code quality declines. GitClear's analysis of 211 million changed lines of code shows an 8-fold increase in code duplication and a 17% decrease in code reuse.[1] Google's 2024 DORA report associates a 25% increase in AI adoption with a 7.2% decrease in delivery stability.[3] Security research reports that up to 62% of AI-generated code contains design flaws or vulnerabilities.[6]

This challenge is already being recognized by organizations achieving success with AI. John Roese, Dell's Global CTO, whose AI initiatives have delivered billions in ROI, recently told universities what organizations need from graduates: "I need you to produce people that...really understand good software architecture and how to build a system...without necessarily having to write the code themselves, because that's the skill you're going to need."[13] His metaphor is telling: developers must become "composers of software" who design what systems should be, rather than musicians who play the instruments. This is precisely the shift from coding to architecting that this analysis explores.

What these studies measure is clear. What they don't explain is the cause: why is this happening, and what should organizations do about it?

To answer this, I analyzed the empirical findings from GitClear, Google DORA, and leading security researchers to identify patterns in AI code quality decline. I then examined the gap: what these studies reveal versus what they leave unexplained. Drawing on 25+ years of organizational transformation experience and software architecture principles, I synthesized these data points into a diagnostic framework that explains the root cause.

This analysis yields three contributions:

the Tony Stark Principle (a decision heuristic for AI-assisted development),

an organizational maturity model (showing the path from naive AI adoption to architecture-first practices), and

a practical transformation roadmap for organizations seeking to address this challenge.

THE COMPETENCE INVERSION

Analysis of recent studies reveals a troubling trend: while developer productivity metrics are rising, code quality issues are increasing.[1,4] Submissions contain more bugs, security vulnerabilities, poor abstractions, and architectural anti-patterns. The initial reaction might be to blame the AI tools themselves, but the root cause runs deeper.

Many developers find themselves using AI tools without the architectural context needed to direct them effectively. When organizational pressures prioritize velocity over design, developers delegate implementation to AI without fully articulating the system context, constraints, and failure modes that should guide that implementation. The result is code that compiles, passes basic tests, and looks plausible but lacks the architectural integrity required for maintainable, scalable systems.

This is not a new skills gap. It is an exposure of a long-standing organizational failure to invest in architectural capability.

For years, many developers have not had the opportunity to develop deep architectural judgment. This is not because they lack the ability, but because organizations have not created time, incentives, or pathways for this growth. Manual coding was slow enough that struggling through implementation naturally built an implicit understanding of system design. AI coding tools remove that friction and, with it, the incidental learning opportunity that masked the absence of intentional architectural development.

THE AI AMPLIFICATION EFFECT

AI coding tools are skill amplifiers, not skill replacements. As industry observers have noted, AI is strong at tactics but struggles with strategy. These tools multiply whatever architectural competence a developer possesses:

Strong architectural judgment → AI accelerates excellent outcomes

Weak architectural judgment → AI accelerates mediocre outcomes

No architectural judgment → AI generates plausible-looking technical debt

Consider the types of issues appearing in AI-assisted code. They are rarely syntax errors. AI handles syntax well. Instead, we see:

Poor abstraction boundaries that create brittle coupling

Inadequate error handling that fails in production

Missing edge cases that create security vulnerabilities

Performance anti-patterns that don't scale

Brittle integration points that cascade failures

These are architectural judgment failures. They stem from developers not understanding the system context where code will live, not from AI generating bad syntax.

This amplification effect also operates at organizational scale. Dell's CTO John Roese learned this when evaluating AI projects: "Does this AI project actually build on the way we want to run the business in the future? Or is it a big blanket that we're throwing over a giant mess to hide bad processes, bad structures?" His rule: even if an AI tool promises cost savings, if it hides structurally unsound practices rather than fixing them, don't implement it. "That's just a crutch. It's not going to be sustainable."[13] The same applies to code architecture. AI tools amplify whatever foundation you provide. If that foundation is architecturally sound, AI accelerates quality outcomes. If the foundation is flawed, AI accelerates technical debt.

Consider a common example: A developer receives a user story to create an API endpoint that returns team performance metrics. They prompt AI: "Create a REST endpoint that returns metrics for a given team ID." AI generates functional code that queries the database and returns JSON. The code works. Tests pass. It ships.

What's missing? Authorization checks. Any authenticated user can now view any team's confidential metrics by changing the team ID in the URL. This is a critical access control vulnerability (CWE-639, Authorization Bypass Through User-Controlled Key[12]) and one of the most common security flaws in modern applications. A developer with architectural context would have recognized this as a "resource access pattern" requiring permission verification within the organization's role-based access control model. They would have prompted AI with those constraints: "User must be a manager of the requested team. Use our canAccessTeam() function. Return 403 if unauthorized. Follow the pattern from our existing team endpoints." Given those constraints, the AI can generate the authorization check along with the query. The vulnerability exists not because AI is bad at security, but because the developer didn't provide the architectural context about security boundaries, existing patterns, and access control requirements.
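A minimal sketch of the difference, written in Python without a web framework so the access check stays visible. The in-memory data, the manager-only role model, and the function names are illustrative assumptions; `can_access_team` is a hypothetical stand-in for the `canAccessTeam()` function named in the prompt above, and each handler returns an HTTP-style `(status, body)` pair:

```python
from dataclasses import dataclass

# Hypothetical in-memory data; a real service would query a database.
TEAM_METRICS = {
    "team-a": {"velocity": 42, "defect_rate": 0.03},
    "team-b": {"velocity": 37, "defect_rate": 0.05},
}

# Hypothetical role model: which users manage which teams.
TEAM_MANAGERS = {
    "team-a": {"alice"},
    "team-b": {"bob"},
}


@dataclass(frozen=True)
class User:
    user_id: str


def can_access_team(user: User, team_id: str) -> bool:
    """Hypothetical stand-in for canAccessTeam(): only managers of a team may read it."""
    return user.user_id in TEAM_MANAGERS.get(team_id, set())


def get_team_metrics_naive(user: User, team_id: str) -> tuple[int, dict]:
    # What an under-specified prompt tends to produce: any authenticated
    # user can read any team's metrics just by changing team_id.
    if team_id not in TEAM_METRICS:
        return 404, {"error": "team not found"}
    return 200, TEAM_METRICS[team_id]


def get_team_metrics(user: User, team_id: str) -> tuple[int, dict]:
    # Constraint from the prompt: verify authorization before touching the data.
    if not can_access_team(user, team_id):
        return 403, {"error": "forbidden"}
    if team_id not in TEAM_METRICS:
        return 404, {"error": "team not found"}
    return 200, TEAM_METRICS[team_id]


if __name__ == "__main__":
    alice = User("alice")
    print(get_team_metrics_naive(alice, "team-b"))  # leaks team-b's metrics
    print(get_team_metrics(alice, "team-b"))        # (403, {'error': 'forbidden'})
```

Checking authorization before existence is a deliberate choice in this sketch. An unauthorized caller gets 403 for unknown team IDs too, so probing the endpoint does not reveal which teams exist.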

THE TONY STARK PRINCIPLE

We rarely see Tony Stark writing code. He designs and architects. He directs JARVIS to implement his vision because he deeply understands systems, constraints, materials science, energy dynamics, and failure modes. He earned that ability through mastery, not by skipping fundamentals.

The Tony Stark Principle states: "You cannot effectively direct AI to code what you cannot architect yourself."

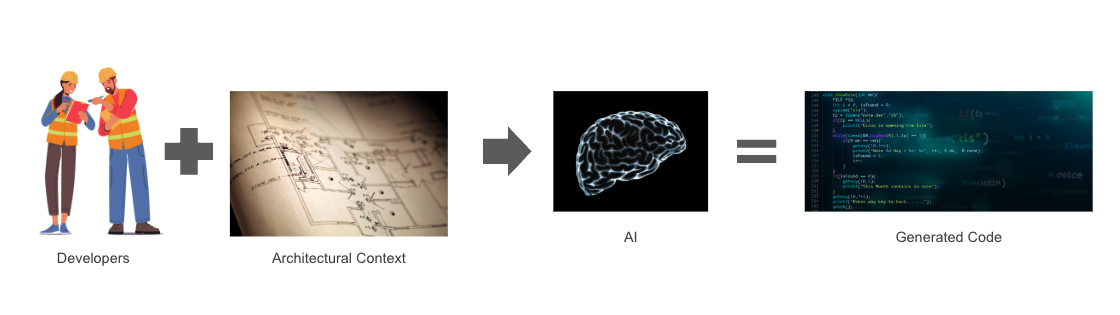

Figure 1: The Tony Stark Principle in Action: Developer architectural understanding combined with system design enables AI to generate quality code. Without architectural context, AI generates plausible-looking technical debt.

As illustrated in Figure 1, the quality of AI-generated code depends entirely on the architectural context provided by the developer. The developer's understanding (brain) combined with explicit system design (blueprints) guides AI to produce sound implementations.

This principle explains why some developers thrive with AI tools while others struggle. The difference is not coding skill - it's architectural context.

Developers who understand system design, can articulate constraints, and evaluate trade-offs use AI as a force multiplier. Those who have not yet developed this architectural understanding, or work in organizations that don't create space for it, find that AI tools amplify uncertainty rather than capability.

By "architecture," we mean the software architecture domain - the technical discipline that translates requirements into sound system design. This includes understanding design intent, recognizing relevant constraints, evaluating tradeoffs, and applying engineering principles at the appropriate level of abstraction. It's the critical step that comes after product managers define functional and non-functional requirements, but before implementation begins. This is where developers reason about how to build, not just what to build.

This is not a call for big upfront design or waterfall planning. Architecture evolves iteratively alongside implementation in agile development. But at each iteration, someone must make architectural decisions: which patterns to apply, what abstractions make sense, how this integrates with existing systems, what tradeoffs matter. AI tools don't eliminate this thinking. They expose when it's absent. Whether architecture is emergent or planned, flexible or rigid, initial or mature, developers still need architectural reasoning skills at the moment they're directing AI to generate code. The question isn't whether to architect. It's whether developers can think architecturally at the appropriate level for each decision.

THE ROOT CAUSE CHAIN

Synthesizing these findings with organizational transformation experience reveals a predictable sequence:

1. AI tools generate code without requiring deep understanding from the developer

2. Developers submit AI-generated code that works in isolation and sometimes even passes integration testing

3. Code contains architectural issues because the developer did not provide proper constraints to the AI

4. This happens because the developer

a) doesn't articulate the design intent, constraints, and tradeoffs,

b) doesn't understand the architectural implications of the requirements, or

c) lacks the architectural thinking skills to translate requirements into sound design decisions

5. These root causes exist because developers have not invested time in learning the system's architecture or developing their architectural skills

6. Developers are not investing that time because organizations reward code velocity, not design quality

7. Code volume increases while architectural debt compounds

Organizations celebrate productivity gains measured in story points and commit frequency while architectural erosion quietly undermines system sustainability. The technical debt accumulates faster than ever because AI does not just accelerate coding. It accelerates architectural mistakes.

THE ORGANIZATIONAL MATURITY CURVE

Organizations progress through five distinct stages in their AI adoption journey. Understanding these stages helps leaders assess their current state and plan their path forward.

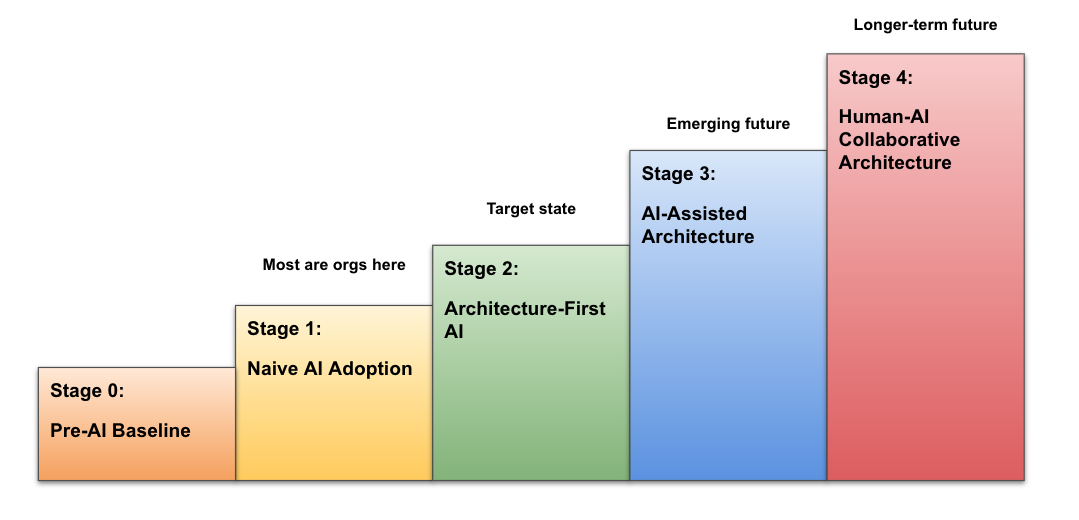

Figure 2: The Organizational Maturity Model: Most organizations are currently at Stage 1 (Naive AI Adoption). Stage 2 (Architecture-First AI) is the target state that enables sustainable productivity gains.

As shown in Figure 2, organizations must progress from Stage 1 (Naive AI Adoption) to Stage 2 (Architecture-First AI) to achieve sustainable quality outcomes.

Stage 0: Pre-AI Baseline

Developers write code manually. Some have architectural skills, some don't. Quality varies but traces to individual competence. Learning happens through implementation struggle.

Stage 1: Naive AI Adoption (Most organizations are here)

Developers use AI copilots to code faster. Architectural skills remain unchanged. Code volume increases and architectural debt compounds faster. Quality issues emerge at scale.

Stage 2: Architecture-First AI (The target state)

Developers focus on design, constraints, and system context first. AI handles implementation within well-defined boundaries. Code quality improves because architectural thinking leads the process. The Tony Stark + JARVIS model.

Stage 3: AI-Assisted Architecture (Emerging future)

AI begins to help generate architectural options and tradeoff analysis. AI identifies patterns and anti-patterns in proposed designs. Humans evaluate, select, and refine AI-generated architectures based on business context, organizational constraints, and risk tolerance. This stage only works if humans have developed deep architectural competence in Stage 2. Without it, organizations simply automate architectural failure at scale.

Stage 4: Human-AI Collaborative Architecture (Longer-term future)

AI and humans co-create architecture in tight feedback loops, with AI handling pattern matching, constraint checking, and option generation while humans provide business strategy alignment, risk assessment, and organizational context. The evaluation problem persists at a higher level of abstraction. Humans still need deep architectural judgment to direct and assess AI-generated designs. Organizations that skipped investing in Stage 2 capabilities find themselves unable to effectively use AI architectural tools.

The gap between Stage 1 and Stage 2 requires organizational transformation, not just tool adoption. It demands a fundamental shift in what developers do, how they are evaluated, and what capabilities organizations build.

THE SHIFT REQUIRED: FROM CODING TO ARCHITECTING

With AI handling implementation details, developers must shift focus to higher-value activities.

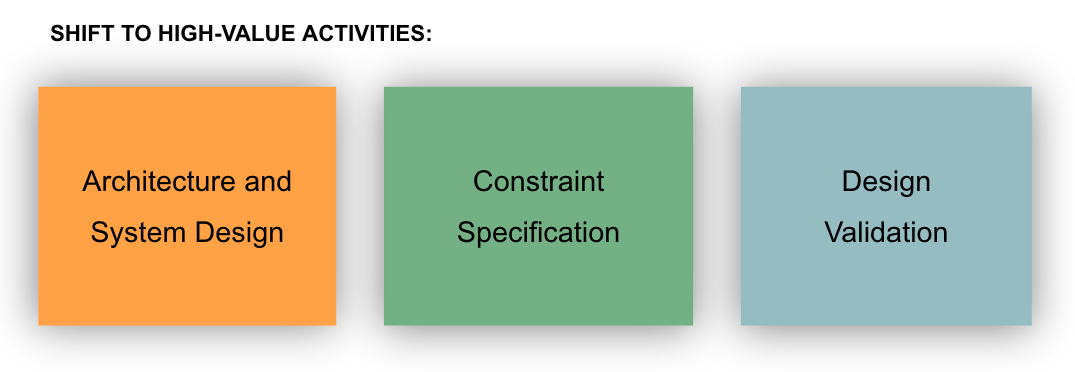

Figure 3: Shift to High-Value Activities: Organizations must redirect developer effort toward architectural thinking: system design, constraint specification, and design validation.

Figure 3 illustrates the critical shift in how developers spend their time. Rather than focusing primarily on syntax and implementation, they must invest in architecture and system design, constraint specification, and design validation.

Architecture and System Design

Identifying necessary components and their interactions

Defining clean abstraction boundaries

Simplifying dependencies and managing complexity

Anticipating failure modes and designing resilience

Understanding performance characteristics and scaling constraints

Constraint Specification

Articulating architectural intent before implementation

Defining non-functional requirements clearly

Specifying integration patterns and contracts

Establishing security and compliance boundaries

Design Validation

Evaluating AI-generated code against architectural principles

Identifying subtle issues in abstractions and coupling

Ensuring consistency with system-wide patterns

Validating performance and security characteristics

This is not about abandoning coding or requiring everyone to become system architects. Rather, it is about ensuring developers at all levels understand architecture sufficiently to use AI tools effectively. Senior engineers and architects design system-level architecture. Mid-level developers need architectural literacy to make implementation decisions that align with that design. Junior developers must learn to recognize architectural patterns and work within defined constraints. The cycle between architecture and coding remains iterative, but the emphasis shifts toward architectural understanding at every level.

WHY THIS MATTERS NOW

The architectural capability gap is already visible. In organizations that haven't created space for architectural development, developers report:[10,11]

Difficulty explaining their code's architectural rationale

Reduced ability to debug complex system interactions

Weakening grasp of fundamental design patterns

Growing dependence on AI for basic design decisions

Meanwhile, organizations face:

Mounting technical debt from architecturally unsound code

Increasing maintenance costs as systems become brittle

Growing security vulnerabilities from poor design

Difficulty scaling systems built without architectural rigor

The productivity gains are real, but they come at a hidden cost: accelerated architectural decay.

Without intervention, organizations will build faster while building worse - creating systems that are expensive to maintain, difficult to evolve, and prone to catastrophic failure.

THE TRANSFORMATION ROADMAP

Organizations cannot solve this with tools or training alone. It requires systematic transformation across practices, metrics, and culture.

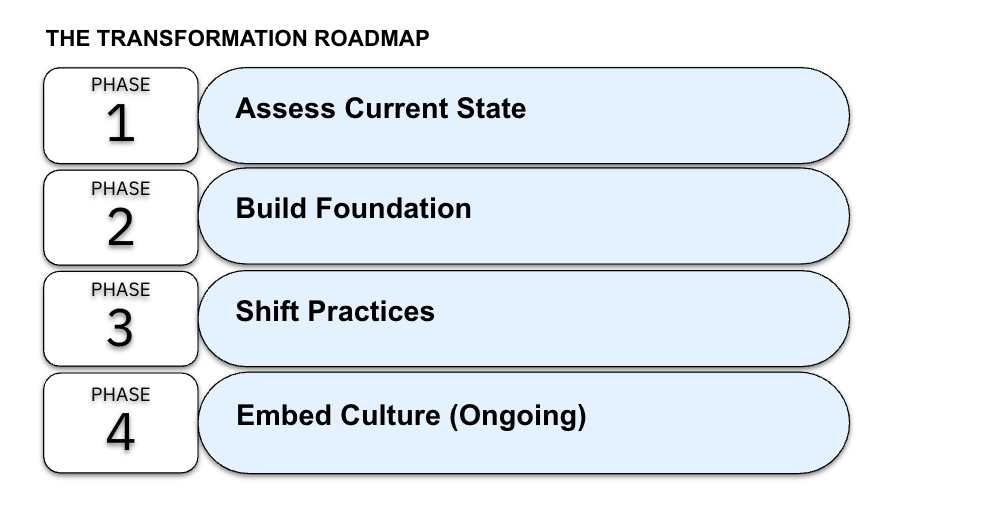

Figure 4: The Four-Phase Transformation Roadmap: A practical pathway from assessment to embedded architectural culture.

Figure 4 outlines the four phases of transformation. Each phase builds on the previous one, creating sustainable organizational change.

While presented sequentially, this transformation roadmap is intentionally iterative. Organizations need not complete one phase before beginning the next. Assessment continues throughout implementation. Foundation-building overlaps with early practice shifts. Cultural embedding starts during tactical changes, not after them.

Think of these phases as evolving areas of emphasis rather than strict sequential stages. Agile teams, in particular, will recognize this pattern: you build architectural capability in the same iterative way you build products - through continuous learning, adaptation, and refinement.

Phase 1: Assess Current State

Audit architectural practices and capabilities

Identify skills gaps in design thinking across the organization

Baseline code quality metrics, especially architectural issues

Evaluate current use of AI coding tools and outcomes

Phase 2: Build Foundation

Implement architecture training programs focused on system design thinking

Establish design documentation standards and templates

Create architectural review rituals and decision-making processes

Define "definition of ready" for AI-assisted coding tasks

Develop architectural fitness functions and automated checks
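An architectural fitness function can be as small as a check that fails when code crosses a layer boundary. The Python sketch below assumes a hypothetical layering rule: modules in a `domain` package must not import from `infrastructure` or `api`. It uses only the standard-library `ast` module. The package names and the rule itself are illustrative assumptions, not a prescribed architecture, and relative imports are deliberately skipped to keep the sketch short:

```python
import ast

# Hypothetical layering rule: module prefix -> prefixes it must not import.
FORBIDDEN_IMPORTS = {
    "domain": ("infrastructure", "api"),
}


def _matches(module: str, prefix: str) -> bool:
    """True if `module` is `prefix` itself or a submodule of it."""
    return module == prefix or module.startswith(prefix + ".")


def imported_modules(source: str) -> list[str]:
    """Return the absolute module names imported by a piece of Python source."""
    names: list[str] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Relative imports (level > 0) are skipped in this sketch.
            names.append(node.module)
    return names


def layer_violations(module_name: str, source: str) -> list[str]:
    """List imports in `source` that break FORBIDDEN_IMPORTS for `module_name`."""
    violations = []
    for layer, banned in FORBIDDEN_IMPORTS.items():
        if not _matches(module_name, layer):
            continue
        for imported in imported_modules(source):
            if any(_matches(imported, b) for b in banned):
                violations.append(f"{module_name} imports {imported}")
    return violations


if __name__ == "__main__":
    code = "from infrastructure.db import Session\nimport datetime\n"
    print(layer_violations("domain.billing", code))
    # ['domain.billing imports infrastructure.db']
```

In a pipeline, a check like this would run over every source file and fail the build when any violation is reported. That turns an architectural decision into something AI-generated code is automatically tested against.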

Phase 3: Shift Practices

Require developers to articulate architecture before implementation

Position AI tools as implementation accelerators within architectural constraints

Refocus code reviews on architectural alignment and design quality

Shift metrics from velocity to architectural quality and sustainability

Adjust performance evaluation to reward design thinking

Phase 4: Embed Culture (Ongoing)

Make architectural thinking a core competency at all levels

Update promotion criteria to emphasize design skills

Recognize AI tools as amplifiers requiring strong fundamentals

Build communities of practice around architecture excellence

Continuously evolve architectural standards and patterns

This transformation requires executive sponsorship, resource investment, and patience. The benefits (higher-quality systems, reduced technical debt, more capable developers) accrue gradually, not within a few weeks.

PRACTICAL IMPLEMENTATION

What does this look like in practice? While these recommendations require organizational support and enabling conditions, here are the practical steps at each level:

For Individual Developers:

Before asking AI to generate code, sketch the design and constraints

Articulate architectural intent in comments or design docs first

Use AI to implement within defined boundaries

Invest time studying system design and architecture patterns

Practice evaluating code for architectural quality, not just correctness
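One way to make "sketch the design and constraints first" a habit is to write the constraints as structured data and render the AI prompt from them, so that missing context is visible before any code is generated. The following is a minimal Python sketch. The `ConstraintSpec` fields and prompt layout are illustrative assumptions rather than a standard format. The example values restate the team-metrics scenario from earlier in this paper, and the design-intent wording is hypothetical:

```python
from dataclasses import dataclass, field


@dataclass
class ConstraintSpec:
    """Hypothetical structure for the context a developer supplies before prompting."""
    task: str
    design_intent: str
    security: list[str] = field(default_factory=list)
    non_functional: list[str] = field(default_factory=list)
    patterns_to_follow: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        # Empty sections signal context the developer has not yet thought through.
        sections = {
            "design_intent": [self.design_intent] if self.design_intent.strip() else [],
            "security": self.security,
            "non_functional": self.non_functional,
            "patterns_to_follow": self.patterns_to_follow,
        }
        return [name for name, items in sections.items() if not items]

    def to_prompt(self) -> str:
        lines = [f"Task: {self.task}", f"Design intent: {self.design_intent}"]
        for title, items in (
            ("Security constraints", self.security),
            ("Non-functional requirements", self.non_functional),
            ("Existing patterns to follow", self.patterns_to_follow),
        ):
            if items:
                lines.append(f"{title}:")
                lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


if __name__ == "__main__":
    spec = ConstraintSpec(
        task="Create a REST endpoint that returns metrics for a given team ID.",
        design_intent="Read-only resource access within the existing team API.",
        security=[
            "User must be a manager of the requested team.",
            "Use our canAccessTeam() function.",
            "Return 403 if unauthorized.",
        ],
        patterns_to_follow=["Follow the pattern from our existing team endpoints."],
    )
    print(spec.missing_sections())  # ['non_functional']
    print(spec.to_prompt())
```

The useful part is `missing_sections()`, not the prompt text. It turns "I haven't thought about performance yet" into an explicit gap that the developer must resolve before delegating to AI.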

For Engineering Leaders:

Require architectural decision records for significant changes

Establish design review checkpoints before implementation

Recognize and reward architectural thinking in performance reviews

Provide architecture training and mentorship opportunities

Create space for design work in sprint planning
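Architectural decision records do not require heavy tooling. The following is a minimal Python sketch that renders a record using the widely used Title / Status / Context / Decision / Consequences sections, the format popularized by Michael Nygard. The class name, the validation rules, and the example values are illustrative assumptions:

```python
from dataclasses import dataclass

VALID_STATUSES = ("Proposed", "Accepted", "Deprecated", "Superseded")


@dataclass
class DecisionRecord:
    number: int
    title: str
    status: str
    context: str
    decision: str
    consequences: str

    def __post_init__(self) -> None:
        if self.status not in VALID_STATUSES:
            raise ValueError(f"unknown status: {self.status!r}")
        for name in ("context", "decision", "consequences"):
            if not getattr(self, name).strip():
                # A record without rationale defeats the purpose of an ADR.
                raise ValueError(f"{name} must not be empty")

    def to_markdown(self) -> str:
        return "\n".join([
            f"# {self.number}. {self.title}",
            "",
            "## Status",
            self.status,
            "",
            "## Context",
            self.context,
            "",
            "## Decision",
            self.decision,
            "",
            "## Consequences",
            self.consequences,
        ])


if __name__ == "__main__":
    # Hypothetical record for the team-metrics example in this paper.
    adr = DecisionRecord(
        number=1,
        title="Authorize every team endpoint with canAccessTeam()",
        status="Accepted",
        context="Team metrics are confidential; IDs in URLs are user-controlled.",
        decision="All team endpoints call canAccessTeam() and return 403 on failure.",
        consequences="New endpoints, including AI-generated ones, are reviewed for this check.",
    )
    print(adr.to_markdown())
```

Rejecting empty Context and Consequences fields is the design choice that matters here. It forces the rationale, the part AI cannot supply on its own, to be written down.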

For Organizations:

Redefine developer role expectations around architecture

Invest in architecture capability building programs

Measure architectural quality alongside delivery velocity

Build architectural review into CI/CD pipelines

Create career paths that value design excellence
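As one crude, illustrative proxy that could sit next to velocity on a dashboard, the sketch below counts repeated blocks of consecutive non-blank lines across a set of files. This is loosely the kind of duplication trend GitClear reports.[1] It is not GitClear's methodology. The five-line window, the whitespace normalization, and the function names are assumptions of this sketch:

```python
from collections import defaultdict

Block = tuple[str, ...]
Location = tuple[str, int]


def _windows(text: str, window: int) -> list[tuple[Block, int]]:
    """Return every run of `window` consecutive non-blank lines with its starting line number."""
    # Strip whitespace so indentation changes do not hide duplication;
    # keep the original 1-based line numbers for reporting.
    lines = [(n, line.strip()) for n, line in enumerate(text.splitlines(), start=1) if line.strip()]
    return [
        (tuple(content for _, content in lines[i:i + window]), lines[i][0])
        for i in range(len(lines) - window + 1)
    ]


def duplicated_blocks(files: dict[str, str], window: int = 5) -> dict[Block, list[Location]]:
    """Map each block that appears more than once to the (file, line) places it appears."""
    seen: dict[Block, list[Location]] = defaultdict(list)
    for name, text in files.items():
        for block, start in _windows(text, window):
            seen[block].append((name, start))
    return {block: locs for block, locs in seen.items() if len(locs) > 1}


def duplication_ratio(files: dict[str, str], window: int = 5) -> float:
    """Fraction of all windows that belong to a duplicated block (0.0 to 1.0)."""
    total = sum(len(_windows(text, window)) for text in files.values())
    duplicated = sum(len(locs) for locs in duplicated_blocks(files, window).values())
    return duplicated / total if total else 0.0
```

A rising ratio does not prove architectural decay. It is a prompt to look for missing abstractions, which is exactly the kind of signal that velocity metrics alone never surface.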

THE CONTRARIAN POSITION

This perspective challenges the prevailing narrative around AI coding tools. The mainstream view celebrates democratization: "Anyone can code now! AI removes barriers to software development!"

The reality is more nuanced: AI coding tools require more architectural skill, not less. They expose inadequate design thinking that was previously hidden by the slow pace of manual coding. They are a magnifying glass on competence gaps that always existed.

Treating AI as a democratizing force without investing in architectural capability is like providing industrial manufacturing equipment without engineering training and support. The tools work, but sustainable outcomes require proper foundations.

Some organizations may attempt to sidestep architectural capability development by implementing Retrieval-Augmented Generation (RAG) systems that feed codebases and documentation to AI tools. While such systems provide valuable context, they cannot replace human architectural judgment. Evaluation, trade-off decisions, and novel problem-solving still require developers who think architecturally.

Organizations that recognize this truth early and invest in architectural capabilities before scaling AI adoption will build more sustainable systems faster. Those that chase productivity metrics while architectural competence erodes will accelerate themselves toward unmaintainable complexity.

THE PATH FORWARD

The AI coding revolution is real and unstoppable. The question is not whether to adopt these tools but how to wield them responsibly. The answer requires elevating the developer role from coding to architecting: from tactical implementation to strategic design.

Developers must become like Tony Stark: deeply knowledgeable about systems, capable of articulating architectural vision, able to direct AI implementation within clear constraints. Organizations must support this evolution by changing what they measure, what they reward, and what they build capacity for.

The architecture crisis is solvable, but only if we acknowledge its root cause: AI tools amplify whatever architectural understanding we bring to them. To harness their power, we must first build the architectural competence they require.

Some argue that AI will eventually generate architecture too, making human architectural skill obsolete. This perspective misunderstands the fundamental dynamic. AI architectural tools, when they arrive, will be architecture skill amplifiers, just as coding tools are code skill amplifiers. Without the judgment to evaluate AI-proposed architectures, to understand their trade-offs, and to assess their fit with business context and organizational constraints, companies will simply automate architectural mistakes at catastrophic scale.

The window to build architectural capability is closing, not opening. Organizations that wait for AI to solve their architecture problems will find themselves unable to effectively use AI architecture tools when they emerge.

The choice is clear: evolve developers into architects, or watch AI accelerate our path to unmaintainable systems. The productivity gains are too valuable to abandon, but they demand a fundamental transformation in how we think about software development.

This framework - the Tony Stark Principle, the organizational maturity curve, and the transformation roadmap - emerges from synthesizing empirical data on AI code quality with organizational transformation experience. The studies show us what is happening. Transformation principles explain why. Together, they reveal what organizations must do.

Tony Stark architects more than code. It's time developers made the same shift.

ABOUT THE AUTHOR

Mary Ann Belarmino brings 25+ years of hands-on and leadership experience across product management, software engineering, quality engineering, and digital transformation. She has held global leadership roles focused on product quality and customer outcomes, with deep expertise in SDLC, program management, and organizational change.

She holds a BS in Applied Physics and has graduate-level (MS) education in Electrical Engineering, with executive education from Wharton, MIT, and Harvard. She applies product management thinking to organizational challenges: identifying market gaps, synthesizing research, and developing actionable frameworks.

Her work on transformation strategy draws on years of digital transformation experience and on exploring parallels between historical technology shifts and current AI adoption patterns.


REFERENCES

[1] GitClear (2024). AI Copilot Code Quality: 2025 Data Suggests 4x Growth in Code Clones. Analysis of 211 million changed lines of code, 2020-2024. Available at: https://www.gitclear.com/ai_assistant_code_quality_2025_research

[2] GitClear (2023). Coding on Copilot: 2023 Data Suggests Downward Pressure on Code Quality. Analysis of 153 million changed lines of code. Available at: https://www.gitclear.com/coding_on_copilot_data_shows_ais_downward_pressure_on_code_quality

[3] Google (2024). DevOps Research and Assessment (DORA) 2024 Report. Findings on AI adoption correlation with delivery stability.

[4] Cotroneo, D., Improta, C., Liguori, P. (2025). Human-Written vs. AI-Generated Code: A Large-Scale Study of Defects, Vulnerabilities, and Complexity. arXiv:2508.21634. Available at: https://arxiv.org/abs/2508.21634

[5] Center for Security and Emerging Technology, Georgetown University (2024). Cybersecurity Risks of AI-Generated Code. Issue Brief, November 2024. Available at: https://cset.georgetown.edu/wp-content/uploads/CSET-Cybersecurity-Risks-of-AI-Generated-Code.pdf

[6] Cloud Security Alliance (2025). Understanding Security Risks in AI-Generated Code. July 2025. Available at: https://cloudsecurityalliance.org/blog/2025/07/09/understanding-security-risks-in-ai-generated-code

[7] Researchers (2025). Assessing the Quality and Security of AI-Generated Code: A Quantitative Analysis. arXiv:2508.14727. Analysis of 4,442 Java coding assignments. Available at: https://arxiv.org/abs/2508.14727

[8] National Center for Biotechnology Information (2024). A systematic literature review on the impact of AI models on the security of code generation. Available at: https://pmc.ncbi.nlm.nih.gov/articles/PMC11128619/

[9] CrowdStrike (2025). Security Flaws in DeepSeek-Generated Code Linked to Political Bias. Counter Adversary Operations research, December 2025. Available at: https://www.crowdstrike.com/en-us/blog/crowdstrike-researchers-identify-hidden-vulnerabilities-ai-coded-software/

[10] Stack Overflow (2024). Developer Survey data on AI tool usage and developer practices. Available at: https://survey.stackoverflow.co/2024/ai

[11] Harness (2025). State of Software Delivery 2025. Findings on developer time spent debugging AI-generated code. Available at: https://www.harness.io/state-of-software-delivery

[12] MITRE Corporation. Common Weakness Enumeration (CWE) framework. Available at: https://cwe.mitre.org/

[13] Roese, J. (Dell Global CTO). Multi-Agent Teams, Quantum Computing, and the Future of Work. Super Data Science Podcast, Episode 887. Available at: https://www.superdatascience.com/podcast/sds-887-multi-agent-teams-quantum-computing-and-the-future-of-work-with-dells-global-cto-john-roese

FURTHER READING

For readers interested in exploring the architectural thinking and software design principles that inform this analysis:

Larson, W. (2021). Staff Engineer: Leadership Beyond the Management Track. Explores architecture as a core competency across engineering levels.

Hohpe, G. (2017). The Software Architect Elevator: Redefining the Architect's Role in the Digital Enterprise. O'Reilly Media.

Fowler, M. (2002). Patterns of Enterprise Application Architecture. Addison-Wesley. Ongoing writing at martinfowler.com/architecture.

Beck, K. Ongoing commentary on software design, test-driven development, and the role of AI in development practices.

Booch, G. Perspectives on software architecture, AI augmentation, and the evolving developer role.

Majors, C. (Honeycomb). Writing on engineering practices, observability, and organizational patterns in software teams.
